Rigid Body Collision

Introduction

This section will introduce the fundamentals of rigid body collision. For more advanced topics also refer to the section Advanced Collision Detection.

Shapes

Shapes describe the spatial extent and collision properties of actors. They are used for three purposes within PhysX:

Intersection tests that determine the contacting features of rigid objects.

Scene query tests such as raycasts, overlaps and sweeps.

Defining trigger volumes that generate notifications when other shapes intersect with them.

Shapes are reference counted; see Reference Counting.

Each shape contains a PxGeometry object and a reference to a PxMaterial, which must both be specified upon creation.

The following code creates a shape with a sphere geometry and a specific material:

PxShape* shape = physics.createShape(PxSphereGeometry(1.0f), myMaterial, true);

myActor.attachShape(*shape);

shape->release();

The method PxRigidActorExt::createExclusiveShape() is equivalent to the three lines above.
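The create/attach/release sequence above relies on reference counting: attaching takes a reference of its own, so the caller's release() only drops the reference obtained at creation. The following is a minimal sketch of that idea using toy types. ToyShape and ToyActor are hypothetical stand-ins written for this illustration; they are not PhysX classes and do not reflect how the SDK is implemented.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Toy model of a reference-counted shape; NOT the PhysX implementation.
struct ToyShape {
    int refCount = 1;        // the creator holds the first reference
    bool destroyed = false;

    void acquire() { ++refCount; }
    void release() {
        assert(refCount > 0);
        if (--refCount == 0) destroyed = true;  // a real SDK would free the object here
    }
};

// Toy actor that holds one reference per attached shape.
struct ToyActor {
    std::vector<ToyShape*> shapes;

    void attachShape(ToyShape& s) {
        s.acquire();
        shapes.push_back(&s);
    }

    void detachShape(ToyShape& s) {
        for (std::size_t i = 0; i < shapes.size(); ++i) {
            if (shapes[i] == &s) {
                shapes.erase(shapes.begin() + i);
                s.release();  // drop the actor's reference
                return;
            }
        }
    }
};
```

In this model the shape stays alive after the creator releases it, and is destroyed only when the last actor detaches it.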

Note

For reference counting behavior of deserialized shapes refer to Reference Counting of Deserialized Objects.

The parameter ‘true’ to PxPhysics::createShape() informs the SDK that the shape will not be shared with other actors. You can use shape sharing to reduce the memory costs of your simulation when you have many actors with identical geometry, but shared shapes have a very strong restriction: you cannot update the attributes of a shared shape while it is attached to an actor.

Optionally you may configure a shape by specifying shape flags of type PxShapeFlags. By default a shape is configured as:

a simulation shape (enabled for contact generation during simulation)

a scene query shape (enabled for scene queries)

being visualized if debug rendering is enabled

When a geometry object is specified for a shape, the geometry object is copied into the shape. There are some restrictions on which geometries may be specified for a shape, depending on the shape flags and the type of the parent actors.

TriangleMesh, HeightField and Plane geometries are not supported for simulation shapes that are attached to dynamic actors, unless the dynamic actors are configured to be kinematic.

TriangleMesh and HeightField geometries are not supported for trigger shapes.

See the following sections for more details.
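The two geometry restrictions listed above can be written down as a small predicate. This is only a sketch: GeomKind, ShapeUse, ActorKind and isGeometryAllowed() are hypothetical names invented for this example, and the function encodes just the two rules stated here (it ignores the SDF-based exception for dynamic triangle meshes described later).

```cpp
#include <cassert>

// Hypothetical stand-ins for illustration; these are not PhysX types.
enum class GeomKind { Sphere, Box, Capsule, ConvexMesh, Plane, TriangleMesh, HeightField };

struct ShapeUse {
    bool simulationShape;
    bool triggerShape;
};

struct ActorKind {
    bool dynamicActor;  // false means a static actor
    bool kinematic;     // only meaningful for dynamic actors
};

bool isGeometryAllowed(GeomKind g, ShapeUse use, ActorKind actor) {
    const bool meshLike = (g == GeomKind::TriangleMesh || g == GeomKind::HeightField);

    // Triangle meshes and height fields cannot be trigger shapes.
    if (use.triggerShape && meshLike) return false;

    // Triangle meshes, height fields and planes cannot be simulation shapes
    // on a dynamic actor unless that actor is kinematic.
    const bool nonKinematicDynamic = actor.dynamicActor && !actor.kinematic;
    if (use.simulationShape && nonKinematicDynamic && (meshLike || g == GeomKind::Plane)) return false;

    return true;
}
```

An application could run a check like this before creating shapes to catch unsupported combinations early instead of relying on SDK error messages.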

Detach the shape from the actor as follows:

myActor.detachShape(*shape);

Simulation Shapes and Scene Query Shapes

Shapes may be independently configured to participate in either or both of scene queries and contact tests. This is controlled by PxShapeFlag::eSIMULATION_SHAPE and PxShapeFlag::eSCENE_QUERY_SHAPE. By default, a shape will participate in both.

The following pseudo-code configures a PxShape instance so that it no longer participates in shape pair intersection tests:

void disableShapeInContactTests(PxShape* shape)

{

shape->setFlag(PxShapeFlag::eSIMULATION_SHAPE, false);

}

A PxShape instance can be configured to participate in shape pair intersection tests as follows:

void enableShapeInContactTests(PxShape* shape)

{

shape->setFlag(PxShapeFlag::eSIMULATION_SHAPE, true);

}

To disable a PxShape instance from scene query tests:

void disableShapeInSceneQueryTests(PxShape* shape)

{

shape->setFlag(PxShapeFlag::eSCENE_QUERY_SHAPE, false);

}

Finally, a PxShape instance can be re-enabled in scene query tests:

void enableShapeInSceneQueryTests(PxShape* shape)

{

shape->setFlag(PxShapeFlag::eSCENE_QUERY_SHAPE, true);

}

Note

If the movement of the shape’s actor does not need to be controlled by the simulation at all, i.e., the shape is used for scene queries only and gets moved manually if necessary, then memory can be saved by additionally disabling simulation on the actor itself (see PxActorFlag::eDISABLE_SIMULATION).

Kinematic Triangle Meshes (Planes, Heightfields)

It is possible to create a kinematic PxRigidDynamic that has a triangle mesh (or plane, or heightfield) shape. While that shape has the simulation shape flag set, the actor must stay kinematic. Once the flag is cleared, so that the shape no longer takes part in the simulation, the kinematic flag can be changed as well.

The following code sets up a kinematic triangle mesh actor:

PxRigidDynamic* meshActor = getPhysics().createRigidDynamic(PxTransform(1.0f));

PxShape* meshShape;

if(meshActor)

{

meshActor->setRigidBodyFlag(PxRigidBodyFlag::eKINEMATIC, true);

PxTriangleMeshGeometry triGeom;

triGeom.triangleMesh = triangleMesh;

meshShape = PxRigidActorExt::createExclusiveShape(*meshActor,triGeom,

defaultMaterial);

getScene().addActor(*meshActor);

}

To switch a kinematic triangle mesh actor to a dynamic actor:

PxRigidDynamic* meshActor = getPhysics().createRigidDynamic(PxTransform(1.0f));

PxShape* meshShape;

if(meshActor)

{

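meshActor->setRigidBodyFlag(PxRigidBodyFlag::eKINEMATIC, true);

PxTriangleMeshGeometry triGeom;

triGeom.triangleMesh = triangleMesh;

meshShape = PxRigidActorExt::createExclusiveShape(*meshActor, triGeom,

defaultMaterial);

getScene().addActor(*meshActor);

// Add a convex shape so the actor still has a simulation shape once it is dynamic.

PxConvexMeshGeometry convexGeom = PxConvexMeshGeometry(convexBox);

PxShape* convexShape = PxRigidActorExt::createExclusiveShape(*meshActor, convexGeom,

defaultMaterial);

// Take the triangle mesh out of the simulation first; only then may the kinematic flag be cleared.

meshShape->setFlag(PxShapeFlag::eSIMULATION_SHAPE, false);

meshActor->setRigidBodyFlag(PxRigidBodyFlag::eKINEMATIC, false);

}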

Dynamic Triangle Meshes with SDFs

Dynamic triangle meshes are only supported if they have an SDF (Signed Distance Field). The SDF can optionally be generated during the cooking process. Note that SDFs are only available when using the GPU collision detection pipeline. Sparse SDFs are recommended because the simplest form (a dense SDF) uses a lot of memory, while the collision detection performance of sparse and dense SDFs is almost identical. SDFs are not supported on static actors; on kinematic and dynamic actors they work well in most cases.

Adding a dynamic triangle mesh to a scene works almost identically to adding a kinematic mesh. The main differences are that the PxTriangleMesh must have been cooked with an SDF, and that the mass and other dynamics-related properties must be populated with valid values because they determine the behavior of a dynamic object:

void addDynamicTriangleMeshInstance(const PxTransform& transform, PxTriangleMesh* mesh)

{

PxRigidDynamic* dyn = gPhysics->createRigidDynamic(transform);

dyn->setLinearDamping(0.2f);

dyn->setAngularDamping(0.1f);

PxTriangleMeshGeometry geom;

geom.triangleMesh = mesh;

geom.scale = PxVec3(0.1f, 0.1f, 0.1f);

dyn->setRigidBodyFlag(PxRigidBodyFlag::eENABLE_GYROSCOPIC_FORCES, true);

dyn->setRigidBodyFlag(PxRigidBodyFlag::eENABLE_SPECULATIVE_CCD, true);

PxShape* shape = PxRigidActorExt::createExclusiveShape(*dyn, geom, *gMaterial);

shape->setContactOffset(0.1f);

shape->setRestOffset(0.02f);

PxReal density = 100.f;

PxRigidBodyExt::updateMassAndInertia(*dyn, density);

gScene->addActor(*dyn);

dyn->setSolverIterationCounts(50, 1);

dyn->setMaxDepenetrationVelocity(5.f);

}

If SDF collisions don’t produce satisfactory results, it’s often possible to tweak some parameters to improve the situation.

Increase the contact offset. Larger contact offsets allow the solver to react a bit earlier to collisions that may happen in subsequent timesteps. Contact offsets that are too large slow down collision detection, so finding a good value is usually an iterative process.

Make objects heavier, within reasonable limits. When objects are extremely lightweight, or when objects with very different masses come into contact, the collision response might not be perfect because those cases make solver convergence more difficult.

Adjust the SDF resolution. If the SDF resolution is too low, very thin parts of the mesh may not collide because the SDF cannot capture them. If it is too high, memory consumption increases and collision detection becomes a bit slower.

Reduce the maximal depenetration velocity. This makes the collision response a bit slower and can help to avoid overshooting.

Increase the number of position iterations. This gives the solver more iterations to improve convergence.

Increase friction. This helps objects in contact come to rest.

Use meshes with a good tessellation when generating the SDF. Watertight triangle meshes without self-intersections or other defects simplify the cooking process. If the mesh has holes, the cooker closes them, but the shape of the closing surface is then decided by the cooker.

Reduce the timestep of the physics simulation. This is only recommended if all other adjustments fail to give good results.
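To put the SDF resolution trade-off mentioned above into numbers, the following sketch estimates the memory taken by a dense grid of distance samples. It assumes one 4-byte float per cell and no additional metadata; the layout of actual cooked SDF data may differ, and denseSdfBytes() is a hypothetical helper, not an SDK function.

```cpp
#include <cassert>
#include <cstdint>

// Bytes needed for a dense grid that stores one float distance sample per cell.
// Assumption: sizeof(float) samples and no extra metadata; real cooked data may differ.
std::uint64_t denseSdfBytes(std::uint32_t nx, std::uint32_t ny, std::uint32_t nz) {
    assert(nx > 0 && ny > 0 && nz > 0);
    return static_cast<std::uint64_t>(nx) * ny * nz * sizeof(float);
}
```

Under these assumptions, doubling the resolution along every axis multiplies the memory by eight, which is why dense SDFs become expensive quickly and sparse SDFs are the recommended form.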

Trigger Shapes

Trigger shapes play no part in the simulation of the scene (though they can be configured to participate in scene queries). Instead, their role is to report that there has been an overlap with another shape. Contacts are not generated for the intersection, and as a result contact reports are not available for trigger shapes. Further, because triggers play no part in the simulation, the SDK will not allow the PxShapeFlag::eSIMULATION_SHAPE and PxShapeFlag::eTRIGGER_SHAPE flags to be raised simultaneously; that is, if one flag is raised then attempts to raise the other will be rejected, and an error will be passed to the error stream.

Trigger shapes can be used to implement sensors. They could be used for example to determine if a player has reached a checkpoint zone, or perhaps to automatically open a door when an object moves in front of it. In such examples the region of space around the checkpoint or the door would be represented as an actor with a unique shape (often a box or a sphere) configured as a trigger shape:

PxShape* sensorShape;

gSensorActor->getShapes(&sensorShape, 1);

sensorShape->setFlag(PxShapeFlag::eSIMULATION_SHAPE, false);

sensorShape->setFlag(PxShapeFlag::eTRIGGER_SHAPE, true);

The overlaps with trigger shapes are reported through the user-defined PxSimulationEventCallback object, specifically through the implementation of PxSimulationEventCallback::onTrigger():

void MySimulationEventCallback::onTrigger(PxTriggerPair* pairs, PxU32 count)

{

for(PxU32 i=0; i<count; i++)

{

// ignore pairs when shapes have been deleted

if(pairs[i].flags & (PxTriggerPairFlag::eREMOVED_SHAPE_TRIGGER | PxTriggerPairFlag::eREMOVED_SHAPE_OTHER))

continue;

// Detect for example that a player entered a checkpoint zone

if((pairs[i].otherActor == gPlayerActor) &&

(pairs[i].triggerActor == gSensorActor))

{

gCheckpointReached = true;

}

}

}

The code above iterates through all pairs of overlapping shapes that involve a trigger shape. If it is found that the checkpoint sensor has been touched by the player then the flag gCheckpointReached is set to true.

Broad-phase Collision Detection

The broad phase is the first part of the collision pipeline. It is called broad phase because it detects overlaps between axis-aligned bounding boxes, i.e. it only reports potential collisions rather than actual collisions. The actual collisions are detected by the next phase in the physics pipeline, named narrow phase.
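The difference between potential and actual collisions can be illustrated with a minimal, brute-force sketch. The Aabb struct and both functions below are hypothetical stand-ins written for this example; they are not PhysX types, and the SDK's broad-phase algorithms are far more sophisticated than an O(n^2) loop.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Axis-aligned bounding box; a hypothetical stand-in, not a PhysX type.
struct Aabb {
    float min[3];
    float max[3];
};

// True when the two boxes overlap on all three axes.
// Boxes that merely touch count as overlapping here (an arbitrary choice for this sketch).
bool aabbOverlap(const Aabb& a, const Aabb& b) {
    for (int axis = 0; axis < 3; ++axis) {
        if (a.max[axis] < b.min[axis] || b.max[axis] < a.min[axis]) return false;
    }
    return true;
}

// Brute-force O(n^2) search for candidate pairs. A narrow phase would still
// have to test the real geometry of each reported pair.
std::vector<std::pair<std::size_t, std::size_t>> candidatePairs(const std::vector<Aabb>& boxes) {
    std::vector<std::pair<std::size_t, std::size_t>> pairs;
    for (std::size_t i = 0; i < boxes.size(); ++i) {
        for (std::size_t j = i + 1; j < boxes.size(); ++j) {
            if (aabbOverlap(boxes[i], boxes[j])) pairs.emplace_back(i, j);
        }
    }
    return pairs;
}
```

For example, two unit-radius spheres centered at (1, 1, 1) and (2.5, 2.5, 2.5) have bounding boxes [0, 2]^3 and [1.5, 3.5]^3, which overlap, although the spheres themselves are about 2.6 units apart and do not touch. The broad phase would report this pair and the narrow phase would then reject it.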

Broad-phase Algorithms

PhysX supports several broad-phase algorithms:

sweep-and-prune (SAP)

multi box pruning (MBP)

automatic box pruning (ABP)

parallel automatic box pruning (PABP)

GPU broadphase (GPU)

PxBroadPhaseType::eSAP is a good generic choice with great performance when many objects are sleeping. Performance can degrade significantly though, when all objects are moving, or when large numbers of objects are added to or removed from the broad-phase. This algorithm does not need world bounds to be defined in order to work.

PxBroadPhaseType::eMBP is an algorithm introduced in PhysX 3.3. It is an alternative broad-phase algorithm that does not suffer from the same performance issues as eSAP when all objects are moving or when inserting large numbers of objects. However its generic performance when many objects are sleeping might be inferior to eSAP, and it requires users to define world bounds (broadphase regions) in order to work.

PxBroadPhaseType::eABP is a revisited implementation of PxBroadPhaseType::eMBP introduced in PhysX 4. It automatically manages world bounds and broad-phase regions, thus offering the convenience of PxBroadPhaseType::eSAP coupled to the performance of PxBroadPhaseType::eMBP. While PxBroadPhaseType::eSAP can remain faster when most objects are sleeping and PxBroadPhaseType::eMBP can remain faster when it uses a large number of properly-defined regions, PxBroadPhaseType::eABP often gives the best performance on average and the best memory usage. It is a good default choice for the broadphase.

PxBroadPhaseType::ePABP is a revisited implementation of PxBroadPhaseType::eABP introduced in PhysX 5. It is the same as PxBroadPhaseType::eABP, but taking advantage of multiple threads. Because the single-threaded ABP implementation is very fast on its own, its multithreaded version is only faster for large scenes, not necessarily for small ones. It also uses more memory.

PxBroadPhaseType::eGPU is a GPU implementation of the incremental sweep and prune approach. Additionally, it uses an ABP-style initial pair generation approach to avoid large spikes when inserting shapes. It keeps the advantage of the traditional SAP approach, which performs well when many objects are sleeping, and because it is fully parallel it also performs well when lots of shapes are moving or when pairs are inserted and removed at runtime.

The desired broad-phase algorithm is controlled by PxSceneDesc::broadPhaseType.
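To give an idea of what the sweep-and-prune family of algorithms does, here is a minimal one-shot "sort and sweep" sketch. It is not the PhysX implementation: eSAP is incremental and exploits frame-to-frame coherence, whereas this version re-sorts everything on each call. Box3 and sortAndSweep() are hypothetical names introduced for this example.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <utility>
#include <vector>

// Axis-aligned box; a hypothetical stand-in, not a PhysX type.
struct Box3 {
    float min[3];
    float max[3];
};

// Sorts boxes by their minimum x, then sweeps along x and only compares boxes
// whose x intervals overlap. Returned pairs hold indices into `boxes`.
std::vector<std::pair<std::size_t, std::size_t>> sortAndSweep(const std::vector<Box3>& boxes) {
    std::vector<std::size_t> order(boxes.size());
    std::iota(order.begin(), order.end(), std::size_t(0));
    std::sort(order.begin(), order.end(), [&](std::size_t a, std::size_t b) {
        return boxes[a].min[0] < boxes[b].min[0];
    });

    std::vector<std::pair<std::size_t, std::size_t>> pairs;
    for (std::size_t i = 0; i < order.size(); ++i) {
        const Box3& a = boxes[order[i]];
        for (std::size_t j = i + 1; j < order.size(); ++j) {
            const Box3& b = boxes[order[j]];
            if (b.min[0] > a.max[0]) break;  // sorted order: no later box can overlap `a` on x
            const bool overlapY = a.min[1] <= b.max[1] && b.min[1] <= a.max[1];
            const bool overlapZ = a.min[2] <= b.max[2] && b.min[2] <= a.max[2];
            if (overlapY && overlapZ) {
                pairs.emplace_back(std::min(order[i], order[j]), std::max(order[i], order[j]));
            }
        }
    }
    return pairs;
}
```

The early break is what prunes most comparisons when objects are spread out along the sweep axis. It also hints at why this family of algorithms can degrade when many objects cluster on that axis or when the sorted order changes a lot between frames.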

Regions of Interest

A region of interest is a world-space AABB around a volume of space controlled by the broad-phase. Objects contained inside those regions are properly handled by the broad-phase. Objects falling outside of those regions lose all collision detection. Ideally those regions should cover the whole simulation space, while limiting the amount of covered empty space.

Regions can overlap, although for maximum efficiency it is recommended to minimize the amount of overlap between regions as much as possible. Note that two regions whose AABBs just touch are not considered overlapping. For example the PxBroadPhaseExt::createRegionsFromWorldBounds() helper function creates a number of non-overlapping region bounds by simply subdividing a given world AABB into a regular 2D grid.
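The grid subdivision described above can be sketched as follows. This is not the source of PxBroadPhaseExt::createRegionsFromWorldBounds(); RegionBounds and subdivideWorld() are hypothetical names, and the choice of subdividing over x and z while keeping the full y extent is an assumption made for this example.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// World-space box; a hypothetical stand-in, not a PhysX type.
struct RegionBounds {
    float min[3];
    float max[3];
};

// Splits `world` into cellsPerSide x cellsPerSide regions over x and z.
// Every region spans the full y range of the world bounds.
std::vector<RegionBounds> subdivideWorld(const RegionBounds& world, std::size_t cellsPerSide) {
    assert(cellsPerSide > 0);
    const float dx = (world.max[0] - world.min[0]) / static_cast<float>(cellsPerSide);
    const float dz = (world.max[2] - world.min[2]) / static_cast<float>(cellsPerSide);

    std::vector<RegionBounds> regions;
    regions.reserve(cellsPerSide * cellsPerSide);
    for (std::size_t iz = 0; iz < cellsPerSide; ++iz) {
        for (std::size_t ix = 0; ix < cellsPerSide; ++ix) {
            RegionBounds r;
            r.min[0] = world.min[0] + dx * static_cast<float>(ix);
            r.max[0] = world.min[0] + dx * static_cast<float>(ix + 1);
            r.min[1] = world.min[1];
            r.max[1] = world.max[1];
            r.min[2] = world.min[2] + dz * static_cast<float>(iz);
            r.max[2] = world.min[2] + dz * static_cast<float>(iz + 1);
            regions.push_back(r);
        }
    }
    return regions;
}
```

Neighboring cells share a face, so they touch without overlapping, which matches the rule that regions whose AABBs just touch are not considered overlapping.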

Regions can be defined by the PxBroadPhaseRegion structure, along with a user-data assigned to them. They can be defined at scene creation time or at runtime using PxScene::addBroadPhaseRegion(). The SDK returns handles assigned to the newly created regions, that can be used later to remove regions using PxScene::removeBroadPhaseRegion().

A newly added region may overlap already existing objects. The SDK can automatically add those objects to the new region, if the populateRegion parameter from the PxScene::addBroadPhaseRegion() call is set. However this operation is not cheap and might have a high impact on performance, especially when several regions are added in the same frame. Thus, it is recommended to disable it whenever possible. The region would then be created empty, and it would only be populated either with objects added to the scene after the region has been created, or with previously existing objects when they are updated (i.e. when they move).

Note that only PxBroadPhaseType::eMBP requires regions to be defined. The other algorithms do not. This information is captured within the PxBroadPhaseCaps structure, which lists information and capabilities of each broad-phase algorithm. This structure can be retrieved with a call to PxScene::getBroadPhaseCaps().

Runtime information about current regions can be retrieved using the PxScene::getNbBroadPhaseRegions() and PxScene::getBroadPhaseRegions() functions.

The maximum number of regions is currently limited to 256.

Broad-phase Callback

A callback for broad-phase-related events can be defined within the PxSceneDesc structure. This PxBroadPhaseCallback object will be called when objects are found out of the specified regions of interest, i.e. “out of bounds”. The SDK disables collision detection for those objects. It is re-enabled automatically as soon as the objects re-enter a valid region.

It is up to users to decide what to do with out-of-bounds objects. Typical options are:

delete the objects

let them continue their motion without collisions until they re-enter a valid region

artificially teleport them back to a valid place

This callback is mainly used for PxBroadPhaseType::eMBP.

Interactions

The SDK internally creates an interaction object for each overlapping pair reported by the broad-phase. These objects are not only created for pairs of colliding rigid bodies, but also for pairs of overlapping triggers. Generally speaking users should assume that such objects are created regardless of the involved objects’ types (rigid body, trigger, etc) and regardless of involved PxFilterFlag flags.

The PhysX broadphase operates on shapes, not on actors. This means that an interaction is created for a pair of shapes, and two colliding compound actors could internally generate multiple interaction objects. Aggregates can be used to reduce the number of interactions in this case (see Aggregates).

Collision Filtering

Collision filtering is the mechanism used to discard some overlapping pairs returned by the broadphase. Technically, filtering could be implemented with a single user callback - asking users whether or not a pair should be kept or dismissed. However going from the SDK to users’ code for each pair can quickly become expensive, and sometimes it is not even possible - for example when the broadphase runs on the GPU. Thus, PhysX implements collision filtering in several stages of the physics pipeline. From cheapest & least flexible to most expensive & most flexible, filtering happens:

during the broadphase, using PxPairFilteringMode.

during or after the broadphase, using PxSimulationFilterShader.

after the broadphase, using PxSimulationFilterCallback.

PxPairFilteringMode

This is the most efficient way to discard unwanted pairs (at the earliest point in the pipeline), but it is also the least flexible way: PxPairFilteringMode is mainly used to filter out kinematic pairs directly during the broadphase. A kinematic pair is a pair that contains one or more kinematic objects. In the context of collision filtering we are mostly interested in:

kinematic-vs-kinematic interactions, i.e. when two kinematic objects overlap. What happens for these pairs is controlled by PxSceneDesc::kineKineFilteringMode.

kinematic-vs-static interactions, i.e. when a kinematic object overlaps a static object. What happens for these pairs is controlled by PxSceneDesc::staticKineFilteringMode.

As previously mentioned, an interaction object is internally created for each pair reported by the broadphase. The available pair filtering modes control what happens here:

PxPairFilteringMode::eKEEP creates a regular interaction object for the pair. The pair will be kept, potentially sent to user callbacks, etc.

PxPairFilteringMode::eSUPPRESS creates a placeholder interaction object for the pair. The pair will be ignored, but it can later be switched to a regular interaction.

PxPairFilteringMode::eKILL ignores the pair and does not create an interaction. The pair can eventually be switched to a regular interaction later with a PxScene::resetFiltering() call, but this is an expensive operation.

These modes are not flexible: they are defined at scene creation time for all kinematic pairs and cannot be changed later. Thus it is mostly useful if you know you will never need kinematic pairs out of the broadphase, in which case using PxPairFilteringMode::eKILL for both static-kinematic and kinematic-kinematic pairs is the most efficient thing to do. It is more efficient than the subsequent filter shader or filter callback because the filtering happens directly within the broadphase: discarded pairs are not returned by the broadphase, interaction objects are not created for them, etc. For comparison, the filter shader described in the next section could also potentially avoid creating these interaction objects, but the pairs would still have to be reported by the broadphase to the next stage of the pipeline.
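The way the two scene-wide settings map onto individual pairs can be summarized in a small decision function. The sketch below uses hypothetical enums (BodyKind, PairMode, Interaction) that only mirror the concepts in this section; it is not SDK code, and pairs involving a dynamic body are simply reported as kept because the pair filtering modes do not apply to them.

```cpp
#include <cassert>

// Hypothetical stand-ins for illustration; these are not the PhysX enums.
enum class BodyKind { Static, Kinematic, Dynamic };
enum class PairMode { Keep, Suppress, Kill };
enum class Interaction { Regular, Placeholder, None };

// Picks the mode that applies to a pair, given the two scene-wide settings.
PairMode modeForPair(BodyKind a, BodyKind b, PairMode staticKineMode, PairMode kineKineMode) {
    // Static-vs-static pairs are outside the scope of this sketch.
    assert(!(a == BodyKind::Static && b == BodyKind::Static));

    // Pairs with a dynamic body are left to the later filtering stages.
    if (a == BodyKind::Dynamic || b == BodyKind::Dynamic) return PairMode::Keep;
    if (a == BodyKind::Kinematic && b == BodyKind::Kinematic) return kineKineMode;
    return staticKineMode;  // exactly one static and one kinematic body
}

// Maps a mode to the kind of interaction object described in the text.
Interaction interactionFor(PairMode mode) {
    switch (mode) {
        case PairMode::Keep:     return Interaction::Regular;
        case PairMode::Suppress: return Interaction::Placeholder;
        case PairMode::Kill:     return Interaction::None;
    }
    return Interaction::None;  // unreachable; keeps compilers quiet
}
```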

PxSimulationFilterShader

The most common way to implement collision filtering is via a PxSimulationFilterShader, which is a reasonably fast and flexible mechanism. A filter shader is a standalone user-defined C function called for all pairs of shapes whose axis aligned bounding boxes in world space are found to intersect for the first time. All behavior beyond that is determined by what the shader returns.

In theory such a shader can run on the GPU and thus it should not reference any memory other than arguments of the function and its own local stack variables. Such a function looks like this:

PxFilterFlags FilterShaderExample(

PxFilterObjectAttributes attributes0, PxFilterData filterData0,

PxFilterObjectAttributes attributes1, PxFilterData filterData1,

PxPairFlags& pairFlags, const void* constantBlock, PxU32 constantBlockSize)

{

// let triggers through

if(PxFilterObjectIsTrigger(attributes0) || PxFilterObjectIsTrigger(attributes1))

{

pairFlags = PxPairFlag::eTRIGGER_DEFAULT;

return PxFilterFlag::eDEFAULT;

}

// generate contacts for all that were not filtered above

pairFlags = PxPairFlag::eCONTACT_DEFAULT;

// trigger the contact callback for pairs (A,B) where

// the filtermask of A contains the ID of B and vice versa.

if((filterData0.word0 & filterData1.word1) && (filterData1.word0 & filterData0.word1))

pairFlags |= PxPairFlag::eNOTIFY_TOUCH_FOUND;

return PxFilterFlag::eDEFAULT;

}

And it would be passed to the scene at creation time via PxSceneDesc::filterShader, like this:

PxSceneDesc sceneDesc;

...

sceneDesc.filterShader = FilterShaderExample;

As previously mentioned, the shader filter function should only use the arguments passed to the function to operate. If you need access to shared/global data structures, consider using a PxSimulationFilterCallback instead. These arguments include PxFilterObjectAttributes and PxFilterData for the two objects, and a constant block of memory. Note that the pointers to the two objects are NOT passed, because dereferencing them would mean accessing external memory to the shader, which could potentially crash if the shader code is uploaded to a remote processor.

PxFilterObjectAttributes and PxFilterData are intended to contain all the useful information that one could quickly glean from the pointers. PxFilterObjectAttributes are 32 bits of data, that encode the type of object, for example PxFilterObjectType::eRIGID_STATIC or PxFilterObjectType::eRIGID_DYNAMIC. Additionally, it lets you find out if the object is kinematic or a trigger without accessing the PxShape flags (using the PxFilterObjectIsKinematic() and PxFilterObjectIsTrigger() helper functions).

Each PxShape contains a PxFilterData. This is 128 bits of user defined data that can be used to store application specific information related to collision filtering. This is the other variable that is passed to the filter shader for each object, and again this is done so that the data is available to the shader without the need to call PxShape::getSimulationFilterData().

The constant block is a chunk of per-scene global information that the application can give to the shader to operate on. You will want to use this to encode rules about what to filter and what not.

Finally, PxPairFlags is an output parameter, like the return value PxFilterFlags, though used slightly differently. PxFilterFlags tells the SDK if it should:

ignore the pair for good (PxFilterFlag::eKILL)

ignore the pair while it is overlapping but ask again when filtering related data changes for one of the objects (PxFilterFlag::eSUPPRESS)

or call the low performance but more flexible CPU callback if the shader cannot decide (PxFilterFlag::eCALLBACK).

PxPairFlags specifies additional flags that stand for actions that the simulation should take in the future for this pair. For example, PxPairFlag::eNOTIFY_TOUCH_FOUND means notify the user when the pair really starts to touch, not just when the objects’ bounds overlap.

Let us look at what the above shader does:

// let triggers through

if(PxFilterObjectIsTrigger(attributes0) || PxFilterObjectIsTrigger(attributes1))

{

pairFlags = PxPairFlag::eTRIGGER_DEFAULT;

return PxFilterFlag::eDEFAULT;

}

This means that if either object is a trigger, the shader requests default trigger behavior (notifying the application about the start and end of touch) and returns. Otherwise, it goes on to request ‘default’ collision detection for the pair:

// generate contacts for all that were not filtered above

pairFlags = PxPairFlag::eCONTACT_DEFAULT;

// trigger the contact callback for pairs (A,B) where

// the filtermask of A contains the ID of B and vice versa.

if((filterData0.word0 & filterData1.word1) && (filterData1.word0 & filterData0.word1))

pairFlags |= PxPairFlag::eNOTIFY_TOUCH_FOUND;

return PxFilterFlag::eDEFAULT;

This says that for all other objects, perform ‘default’ collision handling. In addition, there is a rule based on the filterDatas that asks for touch notifications for particular pairs. What the shader does with filterData0 and filterData1 is entirely user-defined, and the above is just an example of what it could look like. To understand the example, let us imagine an artificial scenario where we have different types of objects:

struct FilterGroup

{

enum Enum

{

ePLAYER = (1 << 0),

eOBJECT1 = (1 << 1),

eOBJECT2 = (1 << 2),

eHEIGHTFIELD = (1 << 3),

};

};

An app could identify each shape’s type using PxFilterData::word0. Then it could put a bit mask that specifies each type of object that should generate a report when touched by an object of type word0 into word1:

void setupFiltering(PxShape* shape, PxU32 filterGroup, PxU32 filterMask)

{

PxFilterData filterData;

filterData.word0 = filterGroup; // word0 = own ID

filterData.word1 = filterMask; // word1 = ID mask to filter pairs that trigger a contact callback

shape->setSimulationFilterData(filterData);

}

This sets up the PxFilterData for a shape, and it could be used like this:

setupFiltering(playerShape, FilterGroup::ePLAYER, FilterGroup::eOBJECT1 | FilterGroup::eOBJECT2);

setupFiltering(object1Shape, FilterGroup::eOBJECT1, FilterGroup::ePLAYER);

setupFiltering(object2Shape, FilterGroup::eOBJECT2, FilterGroup::ePLAYER);

This would enable contact notifications (PxPairFlag::eNOTIFY_TOUCH_FOUND) between the player and object1/object2, but not between the player and the heightfield. This is just an example.
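The mask test from the example shader can be checked in isolation with a few lines of standalone code. ToyFilterData below is a hypothetical two-word stand-in for the parts of PxFilterData that the example reads, and wantsTouchNotification() repeats the shader's condition; neither is part of the SDK.

```cpp
#include <cassert>
#include <cstdint>

struct FilterGroup {
    enum Enum : std::uint32_t {
        ePLAYER      = (1u << 0),
        eOBJECT1     = (1u << 1),
        eOBJECT2     = (1u << 2),
        eHEIGHTFIELD = (1u << 3),
    };
};

// Hypothetical stand-in for the two PxFilterData words used by the example shader.
struct ToyFilterData {
    std::uint32_t word0;  // own group ID
    std::uint32_t word1;  // mask of groups that should produce a touch report
};

ToyFilterData makeFilterData(std::uint32_t group, std::uint32_t mask) {
    ToyFilterData data;
    data.word0 = group;
    data.word1 = mask;
    return data;
}

// Same condition as in the example shader: each object's mask must contain
// the other object's group ID.
bool wantsTouchNotification(const ToyFilterData& a, const ToyFilterData& b) {
    return (a.word0 & b.word1) && (b.word0 & a.word1);
}
```

With the setup shown above, the player and object1 satisfy the condition in both directions, while the player and a heightfield shape (whose mask does not list the player) do not. The check is symmetric, so the order in which the two shapes are passed does not matter.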

An alternative group-based filtering mechanism is provided with source in the extensions library’s PxDefaultSimulationFilterShader.

Please refer to the snippets for additional examples. SnippetTriggers in particular shows how to use the filter shader to emulate triggers using regular non-trigger shapes, and also how to use the more flexible filter callback (described in the next section).

PxSimulationFilterCallback

The most flexible (but also the slowest) way to implement collision filtering is with the PxSimulationFilterCallback. It is the most flexible because contrary to the filter shader, the callback can access any memory and do anything it needs to. It is the slowest because it happens after the broadphase, at the last stage of the filtering pipeline, and it is implemented with regular virtual calls.

The user-defined callback must be passed to PxSceneDesc::filterCallback at scene creation time. For performance reasons the callback is not automatically called for each pair returned by the broadphase; the call must be requested inside the previously discussed filter shader using PxFilterFlag::eCALLBACK. This is also why the callback is the slowest filtering mechanism: by design it happens after the filter shader.

The user-defined functions PxSimulationFilterCallback::pairFound() and PxSimulationFilterCallback::pairLost() are called appropriately. Please refer to the functions’ documentation for details. An example of using the filter callback is available in SnippetTriggers.
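The relationship between the shader and the callback can be sketched with toy types: the shader always runs first, and the callback is consulted only when the shader asks for it. Everything below (Decision, ToyFilterCallback, filterPair() and the example shader) is hypothetical and much simpler than the real interfaces. In particular, keeping the pair when no callback is registered is an arbitrary choice made for this sketch.

```cpp
#include <cassert>

// Hypothetical stand-ins for illustration; these are not PhysX types.
enum class Decision { Keep, Suppress, Kill, AskCallback };

struct ToyFilterCallback {
    virtual ~ToyFilterCallback() = default;
    virtual Decision pairFound(int idA, int idB) = 0;
};

using ToyFilterShader = Decision (*)(unsigned groupA, unsigned groupB);

// Runs the cheap shader first and falls back to the callback only on request.
Decision filterPair(ToyFilterShader shader, ToyFilterCallback* callback,
                    int idA, unsigned groupA, int idB, unsigned groupB) {
    const Decision d = shader(groupA, groupB);
    if (d != Decision::AskCallback) return d;
    return callback ? callback->pairFound(idA, idB) : Decision::Keep;
}

// Example shader: same group never collides, group 0 defers to the callback.
Decision exampleShader(unsigned groupA, unsigned groupB) {
    if (groupA == 0 || groupB == 0) return Decision::AskCallback;
    if (groupA == groupB) return Decision::Kill;
    return Decision::Keep;
}

// Example callback that counts how often it is consulted.
struct CountingCallback : ToyFilterCallback {
    int calls = 0;
    Decision pairFound(int, int) override {
        ++calls;
        return Decision::Suppress;
    }
};
```

Pairs that the shader can decide on its own never reach the callback, which keeps the expensive path limited to the pairs that really need it.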

Aggregates

An aggregate (PxAggregate) is a collection of actors represented as a single entry in the broadphase.

Aggregates do not provide extra simulation or query features, but allow you to tell the SDK that a set of actors will be clustered together, which in turn allows the SDK to optimize its spatial data operations. A typical use case is a ragdoll, made of multiple actors connected by joints. Without aggregates, this gives rise to as many broad-phase entries as there are shapes in the ragdoll, and since by nature these shapes are always close to each other it gives birth to a lot of overlapping pairs, and a lot of internal interaction objects. It is typically more efficient to represent the ragdoll in the broad-phase as a single entity, and perform internal overlap tests in a second pass if necessary.

Another potential use case is a single actor with a large number of attached shapes: using an aggregate for these large compound actors limits the number of internal interaction objects, which can give performance gains.

Creating an Aggregate

Create an aggregate with PxPhysics::createAggregate():

PxPhysics* physics; // The physics SDK object

PxU32 nbActors; // Max number of actors expected in the aggregate

bool selfCollisions = true;

PxAggregateFilterHint hint = PxGetAggregateFilterHint(PxAggregateType::eGENERIC, selfCollisions);

PxAggregate* aggregate = physics->createAggregate(nbActors, hint);

If you will never need internal collisions between the actors of the aggregate, disable them at creation time with the selfCollisions flag. This is much more efficient than using the scene filtering mechanism, as it bypasses all internal filtering logic.

Aggregates containing only static or kinematic actors should ideally be created with PxAggregateType::eSTATIC or PxAggregateType::eKINEMATIC. This makes filtering faster, especially when combined with PxPairFilteringMode::eKILL for static-vs-kinematic or kinematic-vs-kinematic pairs.

It is not allowed to enable self collisions on PxAggregateType::eSTATIC aggregates. Both the maximum number of actors and the filtering attributes are immutable.

Populating an Aggregate

Add an actor to an aggregate with PxAggregate::addActor():

PxActor& actor; // Some actor, previously created

aggregate->addActor(actor);

Note that if the actor already belongs to a scene, the call is ignored. Either add the actors to an aggregate and then add the aggregate to the scene, or add the aggregate to the scene and then the actors to the aggregate.

To add the aggregate to a scene (before or after populating it), use PxScene::addAggregate():

scene->addAggregate(*aggregate);

Similarly, to remove the aggregate from the scene, use PxScene::removeAggregate():

scene->removeAggregate(*aggregate);

Releasing an Aggregate

To release an aggregate, just call its release function:

PxAggregate* aggregate; // The aggregate we previously created

aggregate->release();

Releasing an aggregate does not release the aggregated actors. If the aggregate belongs to a scene, the actors are automatically re-inserted in that scene. If you intend to delete both the aggregate and its actors, it is most efficient to release the actors first, then release the aggregate when it is empty.

Amortizing Insertion

Adding many objects to a scene in one frame can be a costly operation. This can be the case for a ragdoll, which as discussed is a good candidate for PxAggregate. Another case is localized debris, for which self-collisions are often disabled. To amortize the cost of object insertion into the broad-phase structure over several frames, spawn the debris in an aggregate, then remove each actor from the aggregate and re-insert it into the scene over those frames.

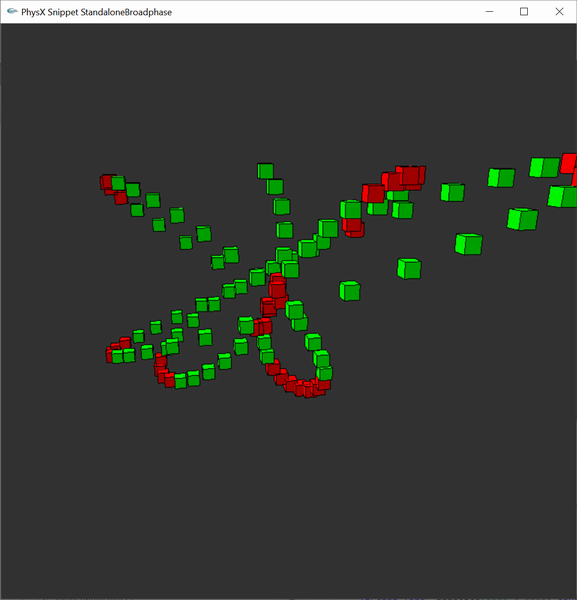
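The amortization scheme can be sketched as a per-frame budget. The containers below are toy stand-ins (actors are plain integers), and migrateSome() is a hypothetical helper; in an application the loop body would presumably call PxAggregate::removeActor() followed by PxScene::addActor() on the real objects.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Toy stand-ins for illustration; actors are represented by integer IDs.
struct ToyAggregate { std::vector<int> actors; };
struct ToyScene     { std::vector<int> actors; };

// Moves at most `budget` actors from the aggregate into the scene.
// Returns how many actors were moved this frame.
std::size_t migrateSome(ToyAggregate& aggregate, ToyScene& scene, std::size_t budget) {
    const std::size_t count = std::min(budget, aggregate.actors.size());
    for (std::size_t i = 0; i < count; ++i) {
        scene.actors.push_back(aggregate.actors.back());
        aggregate.actors.pop_back();
    }
    return count;
}
```

Calling migrateSome() once per frame with a small budget spreads the broad-phase insertion cost over several frames; once the aggregate is empty it can be released.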

Standalone Broad-phase

Since PhysX 5 the broadphase is also exposed as an “immediate mode” standalone component.

First, create a PxBroadPhaseDesc structure to describe the desired broadphase. It captures all the parameters needed to initialize a broadphase. For the GPU broadphase (PxBroadPhaseType::eGPU) it is necessary to provide a CUDA context manager, which can be created this way:

PxFoundation* foundation = ...;

PxCudaContextManagerDesc cudaContextManagerDesc;

PxCudaContextManager* cudaContextManager = PxCreateCudaContextManager(*foundation, cudaContextManagerDesc, PxGetProfilerCallback());

The kinematic filtering flags (PxBroadPhaseDesc::mDiscardStaticVsKinematic and PxBroadPhaseDesc::mDiscardKinematicVsKinematic) dismiss pairs that involve kinematic objects directly within the broadphase. They are currently not supported by the GPU broadphase.

To create the standalone broadphase, call PxCreateBroadPhase(). Creating a CPU broadphase can be as simple as:

PxBroadPhaseDesc bpDesc(PxBroadPhaseType::eABP);

PxBroadPhase* bp = PxCreateBroadPhase(bpDesc);

The returned PxBroadPhase is a low-level broadphase API that only supports batched updates and leaves most of the data management to users. This is useful if you want to use the broadphase with your own memory buffers. Note however that the GPU broadphase works best with buffers allocated in CUDA memory. The PxBroadPhase::getAllocator() function returns an allocator that is compatible with the selected broadphase. It is recommended to allocate and deallocate the broadphase data (bounds, groups, distances) using this allocator.

Note

for CPU broadphases it must be safe to load 4 bytes past the end of the provided bounds array.
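One way to satisfy this padding requirement with ordinary host memory is to over-allocate by a single float. The sketch below is plain C++ and assumes bounds are stored as six consecutive floats (minimum xyz, then maximum xyz). PaddedBounds and kFloatsPerBounds are hypothetical names, not part of the SDK, and the allocator returned by PxBroadPhase::getAllocator() remains the recommended choice.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

static_assert(sizeof(float) == 4, "this sketch assumes 4-byte floats");

// Assumed layout: minimum xyz followed by maximum xyz.
constexpr std::size_t kFloatsPerBounds = 6;

// Hypothetical helper that owns `count` bounds plus 4 trailing bytes,
// so that reading 4 bytes past the last bounds entry stays in owned memory.
class PaddedBounds
{
public:
    explicit PaddedBounds(std::size_t count)
        : mCount(count)
        , mStorage(count * kFloatsPerBounds + 1, 0.0f) // +1 float == 4 bytes of padding
    {
    }

    std::size_t count() const { return mCount; }

    // Number of readable bytes after the last bounds entry.
    std::size_t paddingBytes() const
    {
        return (mStorage.size() - mCount * kFloatsPerBounds) * sizeof(float);
    }

    // Pointer to the six floats of bounds `index`.
    float* at(std::size_t index)
    {
        assert(index < mCount);
        return mStorage.data() + index * kFloatsPerBounds;
    }

    // Start of the contiguous array, as it would be handed to the broadphase.
    const float* data() const { return mStorage.data(); }

private:
    std::size_t mCount;
    std::vector<float> mStorage;
};
```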

PxAABBManager is an easier-to-use broadphase interface that automatically deals with these requirements. You can create an AABB manager after creating the broadphase object, with the PxCreateAABBManager() function. This high-level broadphase has a more traditional one-object-at-a-time API and does the proper data management automatically.

Usage of the standalone broadphase is demonstrated in SnippetStandaloneBroadphase.

Note that to take advantage of multithreaded CPU implementations it is necessary to call PxBroadPhase::update() with a PxBaseTask continuation task. It is legal to pass NULL as a continuation task, but in this case the CPU broadphases will run single-threaded (even PxBroadPhaseType::ePABP). The GPU broadphase does not have this restriction.

Here is an example of how to run a standalone multithreaded CPU broadphase:

// Init time:

// Create CPU dispatcher and task manager

const PxU32 nbThreads = 8;

PxDefaultCpuDispatcher* dispatcher = PxDefaultCpuDispatcherCreate(nbThreads);

PxTaskManager* taskManager = PxTaskManager::createTaskManager(*this, dispatcher);

// Create a standalone broadphase

PxBroadPhase* bp = ...;

// Each frame:

// Start the task manager

taskManager->resetDependencies();

taskManager->startSimulation();

// Gather update data for this frame

const PxBroadPhaseUpdateData bpData = ...;

// Multithreaded broadphase update

{

PxSync sync;

sync.reset();

TaskWait taskWait(&sync);

taskWait.setContinuation(*taskManager, NULL);

bp->update(bpData, &taskWait);

taskWait.removeReference();

sync.wait();

// Make sure you fetch the results after sync.wait() is done

PxBroadPhaseResults results;

bp->fetchResults(results);

}

// Stop the task manager

taskManager->stopSimulation();

// Cleanup time:

PX_RELEASE(bp);

PX_RELEASE(taskManager);

PX_RELEASE(dispatcher);

The TaskWait class used above can be implemented, for example, as:

class TaskWait: public PxLightCpuTask

{

public:

TaskWait(PxSync* sync) : PxLightCpuTask(), mSync(sync) {}

virtual void run() PX_OVERRIDE

{

}

PX_INLINE void release() PX_OVERRIDE

{

PxLightCpuTask::release();

mSync->set();

}

virtual const char* getName() const PX_OVERRIDE

{

return "TaskWait";

}

private:

PxSync* mSync;

};
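The reference-counting idea behind this pattern can be illustrated without the SDK. The following is a deliberately single-threaded sketch: CompletionCounter is a hypothetical class, not a PhysX type, and a real multithreaded version would need an atomic counter. It shows why the caller holds its own reference until all work has been submitted: completion cannot be signaled while that reference is outstanding.

```cpp
#include <cassert>
#include <functional>
#include <utility>

// Hypothetical, single-threaded model of a reference-counted completion task.
// The callback plays the role that mSync->set() plays in TaskWait::release().
class CompletionCounter
{
public:
    explicit CompletionCounter(std::function<void()> onComplete)
        : mOnComplete(std::move(onComplete))
        , mRefCount(1) // the creator's own reference
    {
    }

    // Called once for each piece of work that depends on this task.
    void addReference() { ++mRefCount; }

    // Called when a piece of work finishes, and once by the creator
    // after all work has been submitted.
    void removeReference()
    {
        assert(mRefCount > 0);
        if (--mRefCount == 0)
            mOnComplete(); // last reference gone: signal completion
    }

    int referenceCount() const { return mRefCount; }

private:
    std::function<void()> mOnComplete;
    int mRefCount;
};
```

In the example above, taskWait.removeReference() corresponds to the creator dropping its reference, after which sync.wait() blocks until the remaining work has completed.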

The standalone broadphase does not necessarily operate on shapes or actors; it is up to users to define what the bounds represent. It also does not generate interaction objects for returned pairs; users must implement this themselves if needed.
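As a minimal sketch of such user-side bookkeeping, the class below maintains the set of currently overlapping pairs from per-update lists of created and deleted pairs. IndexPair and PairTracker are hypothetical stand-ins rather than SDK types, and the sketch assumes that deletions should be applied before creations within one update.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <set>
#include <utility>
#include <vector>

// Hypothetical stand-in for one reported pair: two user-defined bounds indices.
struct IndexPair
{
    std::uint32_t id0;
    std::uint32_t id1;
};

// Keeps the set of active pairs up to date across broadphase updates.
class PairTracker
{
public:
    // Applies one update: deleted pairs are removed first, then created pairs are added.
    void apply(const std::vector<IndexPair>& created, const std::vector<IndexPair>& deleted)
    {
        for (const IndexPair& pair : deleted)
            mActive.erase(makeKey(pair.id0, pair.id1));
        for (const IndexPair& pair : created)
            mActive.insert(makeKey(pair.id0, pair.id1));
    }

    // Order of the two indices does not matter.
    bool contains(std::uint32_t a, std::uint32_t b) const
    {
        return mActive.count(makeKey(a, b)) != 0;
    }

    std::size_t size() const { return mActive.size(); }

private:
    using Key = std::pair<std::uint32_t, std::uint32_t>;

    // Normalizes a pair so that the smaller index always comes first.
    static Key makeKey(std::uint32_t a, std::uint32_t b)
    {
        return a < b ? Key(a, b) : Key(b, a);
    }

    std::set<Key> mActive;
};
```

An application would typically attach its own interaction data to each key, for example by replacing the set with a map from key to a user-defined record.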